Recently I was asked to help with recruiting and onboarding new members. As our team and projects grow in both size and complexity, our hand-over documentation has become complicated and only works on certain operating systems. To make onboarding fast and reproducible, I had to come up with a new plan: an isolated coding environment with as gentle a learning curve as possible.

My goal

An isolated environment where every member gets their own resources and custom pre-installed packages.

Our projects at Jobhopin combine multiple languages (Rust, Python, …), and creating a virtualenv is tedious, often taking several attempts to get working. It usually took newcomers 2-3 weeks to learn our current projects and work effectively.

Why not use container images?

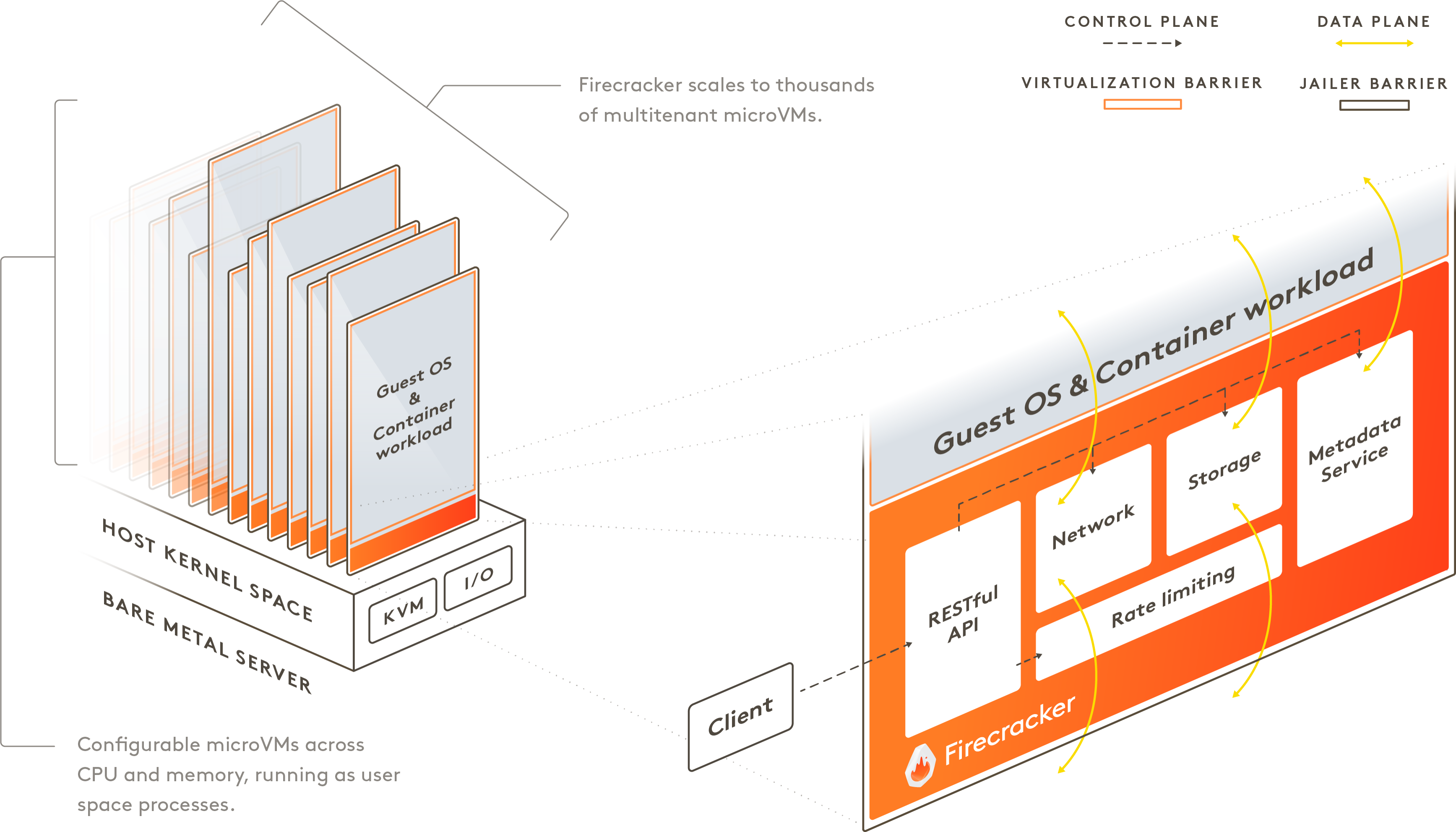

At first I encouraged the team to use Docker and docker-compose to code and debug projects, but our team has both engineers and scientists. The science team found it hard to debug inside Docker, and new members took a long time to learn and make use of Docker images. Furthermore, I wanted to mimic a real production machine that members have root access to: I wanted folks to be able to set sysctls, install new packages, create iptables rules, configure networking with ip, run perf, and do basically anything else, all with strong isolation.

Why not use virtual machines?

I tried some VM vendors (QEMU and VMware) to create one VM per member, but ran into too many problems:

- VM boot time is slow, and snapshots are too large

- There is no convenient API, and VM snapshots have to be created manually, with no code to reproduce them

I want members to only need to provide their credentials and a custom VM size to instantly launch a fresh virtual machine.

Firecracker can start a VM in less than a second from a base OCI container image

When I first read about Firecracker's release, I thought it was just a tool for cloud providers to get stronger security than bare Docker; I didn't think it was something I could use directly to create a dev VM.

After some reading, I was impressed by how fast and convenient Firecracker is at booting VMs.

Some comparisons between Firecracker and QEMU

Firecracker integrates with existing container tooling, which makes adoption fairly painless. I chose Ignite, whose CLI is very similar to Docker's:

ignite run instead of docker run

How to use Firecracker with Ignite

Installing Ignite and starting a fresh VM is very simple; there are basically 3 steps:

Step 1: Check that your system has KVM virtualization enabled, then install Ignite by following the Installing-guide

```
$ ignite version
Ignite version: version.Info{Major:"0", Minor:"8", GitVersion:"v0.10.0", GitCommit:"...", GitTreeState:"clean", BuildDate:"...", GoVersion:"...", Compiler:"gc", Platform:"linux/amd64"}
Firecracker version: v0.22.4
Runtime: containerd
```
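For the KVM part of Step 1, a small script can save some guesswork. This is a minimal sketch, assuming an x86 Linux host: it looks for the vmx (Intel VT-x) or svm (AMD-V) CPU flags in /proc/cpuinfo and checks that /dev/kvm exists and is readable and writable by the current user. The function names are hypothetical helpers, not part of Ignite.

```python
import os


def cpu_has_virt_flags(cpuinfo_text: str) -> bool:
    """Return True if any 'flags' line lists vmx (Intel VT-x) or svm (AMD-V)."""
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            flags = line.split(":", 1)[1].split()
            if "vmx" in flags or "svm" in flags:
                return True
    return False


def check_kvm(cpuinfo_path: str = "/proc/cpuinfo", kvm_dev: str = "/dev/kvm") -> dict:
    """Report whether this Linux host looks ready to run Firecracker VMs."""
    try:
        with open(cpuinfo_path) as f:
            virt = cpu_has_virt_flags(f.read())
    except OSError:
        virt = False  # not Linux, or /proc is unavailable
    return {
        "cpu_virt_flags": virt,
        "kvm_device": os.path.exists(kvm_dev),
        # Firecracker needs read/write access to /dev/kvm (root has it; other users may not)
        "kvm_accessible": os.access(kvm_dev, os.R_OK | os.W_OK),
    }


if __name__ == "__main__":
    for check, ok in check_kvm().items():
        print(f"{check:15} {'ok' if ok else 'MISSING'}")
```

If cpu_virt_flags is missing on a cloud instance, that usually means nested virtualization is not enabled for it.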

Step 2: Create a sample VM config, config.yaml

```yaml
apiVersion: ignite.weave.works/v1alpha4
kind: VM
metadata:
  name: haiche-vm
spec:
  cpus: 2
  memory: 1GB
  diskSize: 6GB
  image:
    oci: weaveworks/ignite-ubuntu
  ssh: true
```
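Since the goal is for each member to supply only a few values (VM name, size, public key), the config can be generated instead of hand-edited. A minimal sketch, assuming one config file per member: MemberVM and render_config are hypothetical names, and the function simply fills in the same fields as the config.yaml above.

```python
from dataclasses import dataclass


@dataclass
class MemberVM:
    """Per-member VM request (hypothetical fields mirroring config.yaml)."""
    name: str
    cpus: int = 2
    memory: str = "1GB"
    disk_size: str = "6GB"
    image: str = "weaveworks/ignite-ubuntu"
    ssh: str = "true"  # "true" to let Ignite generate a key, or a path to a public key


def render_config(vm: MemberVM) -> str:
    """Render an Ignite v1alpha4 VM manifest with the same layout as config.yaml."""
    return "\n".join([
        "apiVersion: ignite.weave.works/v1alpha4",
        "kind: VM",
        "metadata:",
        f"  name: {vm.name}",
        "spec:",
        f"  cpus: {vm.cpus}",
        f"  memory: {vm.memory}",
        f"  diskSize: {vm.disk_size}",
        "  image:",
        f"    oci: {vm.image}",
        f"  ssh: {vm.ssh}",
    ]) + "\n"


if __name__ == "__main__":
    # Example: a bigger VM for one member, using their own public key
    print(render_config(MemberVM(name="alice-vm", cpus=4, memory="4GB",
                                 ssh="/home/alice/.ssh/id_rsa.pub")))
```

With the defaults, render_config(MemberVM(name="haiche-vm")) reproduces the config above; the output can be saved to a file and passed to sudo ignite run --config.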

Step 3: Start your VM in under 125 ms

It takes <= 125 ms to go from receiving the Firecracker InstanceStart API call to the start of the Linux guest user-space /sbin/init process

```
$ sudo ignite run --config config.yaml
INFO[0001] Created VM with ID "3c5fa9a18682741f" and name "haiche-vm"
```

Voilà 🎉 🎉 🎉 you've successfully created a new VM. To list the running VMs, enter:

```
$ ignite ps
VM ID             IMAGE                            KERNEL                            CREATED  SIZE    CPUS  MEMORY  STATE    IPS         PORTS  NAME
3c5fa9a18682741f  weaveworks/ignite-ubuntu:latest  weaveworks/ignite-kernel:5.10.51  63m ago  4.0 GB  2     1.0 GB  Running  172.17.0.3         haiche-vm
```
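To script the later SSH steps you need each VM's IP address. The sketch below parses the text output of ignite ps, assuming (as in the listing above) that NAME is the last column and the IPS column holds plain IPv4 addresses; parse_ignite_ps and vm_ip are hypothetical helpers, and the text format is not a stable API, so treat this as a convenience only.

```python
import re
import subprocess

IPV4 = re.compile(r"^\d{1,3}(?:\.\d{1,3}){3}$")


def parse_ignite_ps(output: str) -> dict:
    """Map VM name -> first IPv4 address found on its `ignite ps` row (header skipped)."""
    vms = {}
    for line in output.splitlines()[1:]:
        tokens = line.split()
        if not tokens:
            continue
        # Tokens like "4.0" or "...:5.10.51" do not fully match the IPv4 pattern
        ips = [t.rstrip(",") for t in tokens if IPV4.match(t.rstrip(","))]
        vms[tokens[-1]] = ips[0] if ips else None
    return vms


def vm_ip(name: str) -> str:
    """Run `ignite ps` (needs ignite on PATH, usually root) and look up one VM's IP."""
    out = subprocess.run(["ignite", "ps"], stdout=subprocess.PIPE,
                         universal_newlines=True, check=True).stdout
    return parse_ignite_ps(out)[name]
```

Splitting on whitespace and picking the last token avoids depending on exact column widths, which matters because values such as "63m ago" and "4.0 GB" themselves contain spaces.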

Once the VM has booted, its network is configured and it is accessible from the host via passwordless SSH, with sudo permissions.
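When automating VM creation, it helps to wait until the VM actually accepts SSH connections before handing it to a member. A minimal sketch using only the standard library: wait_for_port is a hypothetical helper that polls a TCP port until a connection succeeds and returns how long that took.

```python
import socket
import time


def wait_for_port(host: str, port: int = 22, timeout: float = 30.0,
                  interval: float = 0.1) -> float:
    """Poll until a TCP connection to host:port succeeds; return seconds waited.

    Raises TimeoutError if the port is still unreachable after `timeout` seconds.
    """
    start = time.monotonic()
    while True:
        try:
            with socket.create_connection((host, port), timeout=interval):
                return time.monotonic() - start
        except OSError:  # refused, unreachable, or connect timed out
            if time.monotonic() - start >= timeout:
                raise TimeoutError(f"{host}:{port} not reachable after {timeout}s")
            time.sleep(interval)
```

For example, calling wait_for_port("172.17.0.3") right after sudo ignite run reports when sshd starts accepting connections. This measures more than the 125 ms boot figure, since it also includes the guest's init and sshd startup.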

SSH into the VM

Via the ignite CLI

```
$ ignite ssh haiche-vm
Welcome to Ubuntu 18.04.2 LTS (GNU/Linux 5.10.51 x86_64)
...
root@3c5fa9a18682741f:~#
```

To exit SSH, just quit the shell process with exit.

Via the ssh CLI and an RSA key

Add your public key to ~/.ssh/authorized_keys in the newly booted VM, or set the path to your public key in the config and create a new VM:

```yaml
spec:
  ...
  ssh: path/your/id_rsa.pub
```

Then SSH into your VM:

```
$ ssh -i path/your/id_rsa root@172.17.0.3
Welcome to Ubuntu 18.04.2 LTS (GNU/Linux 5.10.51 x86_64)
...
root@3c5fa9a18682741f:~#
```

Secure bastion host

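Finally, wrap everything with the bastion host technique to secure SSH access to the VMs: their 172.17.0.x addresses are private to the workstation that hosts them, so clients jump through that workstation.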

~/.ssh/config

```
Host workstation
    HostName 192.168.1.15
    User haiche
    IdentityFile ~/.ssh/id_rsa

Host haiche-vm
    HostName 172.17.0.3
    ProxyJump workstation
    User root
    IdentityFile ~/.ssh/id_rsa
```

Add the config above to ~/.ssh/config, then run ssh haiche-vm to reach the VM from your client machine.
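With several VMs behind one workstation, writing these entries by hand gets repetitive. A minimal sketch, assuming you already have a mapping of VM names to IPs (for instance from the IPS column of ignite ps): ssh_config is a hypothetical helper that renders one bastion entry plus one ProxyJump entry per VM, matching the layout above.

```python
def ssh_config(bastion_host: str, bastion_ip: str, bastion_user: str,
               vms: dict, identity: str = "~/.ssh/id_rsa") -> str:
    """Render ~/.ssh/config entries: a bastion plus one ProxyJump entry per VM."""
    blocks = [
        f"Host {bastion_host}\n"
        f"    HostName {bastion_ip}\n"
        f"    User {bastion_user}\n"
        f"    IdentityFile {identity}\n"
    ]
    for name, ip in sorted(vms.items()):
        blocks.append(
            f"Host {name}\n"
            f"    HostName {ip}\n"
            f"    ProxyJump {bastion_host}\n"  # hop through the workstation first
            f"    User root\n"
            f"    IdentityFile {identity}\n"
        )
    return "\n".join(blocks)


if __name__ == "__main__":
    print(ssh_config("workstation", "192.168.1.15", "haiche",
                     {"haiche-vm": "172.17.0.3"}))
```

The printed text can be appended to ~/.ssh/config; entries are sorted by VM name so regenerating the file gives a stable diff.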

How I extend the container image to avoid a repeated setup process

After creating a VM, I usually install conda and some packages to run my project. But I don't want to install conda and create a new environment every time I create a VM. Here is how I extend the base Ubuntu image to get a better VM experience:

Dockerfile

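```dockerfile
FROM weaveworks/ignite-ubuntu

# Make the Miniconda binaries available to later build steps and inside the VM
ENV PATH="/root/miniconda3/bin:${PATH}"

# Base tooling; wget is needed to download the Miniconda installer below
RUN apt-get update -qq && \
    apt-get install -y git vim rsync build-essential curl wget

# Install Miniconda in batch mode (-b) into its default prefix, /root/miniconda3
RUN wget https://repo.anaconda.com/miniconda/Miniconda3-latest-Linux-x86_64.sh && \
    mkdir /root/.conda && \
    bash Miniconda3-latest-Linux-x86_64.sh -b && \
    rm -f Miniconda3-latest-Linux-x86_64.sh

# Create the project environment (-y skips the interactive confirmation)
RUN conda create -y -n haiche python=3.7

# Run every following RUN instruction inside the "haiche" environment
SHELL ["conda", "run", "-n", "haiche", "/bin/bash", "-c"]

RUN conda install -y fastapi

# Build-time sanity check: the env's python is used and fastapi imports
RUN which python && python -c "import fastapi"

# Activate the environment automatically in interactive shells (e.g. over SSH)
RUN conda init bash && echo "conda activate haiche" >> ~/.bashrc
```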
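The RUN which python && python -c "import fastapi" line only checks the image at build time. After SSH-ing into a VM, a small report can confirm that the interactive shell really picked up the environment from ~/.bashrc. A minimal sketch, assuming it is saved as a hypothetical check_env.py: it reads CONDA_DEFAULT_ENV, which conda activate exports, and uses importlib.util.find_spec to see whether packages are installed without importing them.

```python
import importlib.util
import os
import sys


def env_report(expected_env: str = "haiche", packages=("fastapi",)) -> dict:
    """Summarise the active interpreter, conda env, and which packages are installed."""
    active = os.environ.get("CONDA_DEFAULT_ENV")  # set by `conda activate`
    return {
        "python": sys.executable,
        "conda_env": active,
        "env_ok": active == expected_env,
        # find_spec locates a top-level package without importing it
        "packages": {p: importlib.util.find_spec(p) is not None for p in packages},
    }


if __name__ == "__main__":
    print(env_report())
```

Inside a VM built from the image above, running python check_env.py should report conda_env as haiche and fastapi as True.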

miniconda-vm.yaml

```yaml
apiVersion: ignite.weave.works/v1alpha4
kind: VM
metadata:
  name: haiche-minconda-vm
spec:
  cpus: 2
  memory: 1GB
  diskSize: 6GB
  image:
    oci: haiche/ubuntu-minconda
  ssh: path/your/id_rsa.pub
```

Here is a tricky part: the current Ignite doesn't support images built locally with Docker, so I had to push the image to the public Docker Hub registry before Ignite could import it. To start a new VM:

```
$ sudo ignite run --config miniconda-vm.yaml
...
INFO[0002] Created image with ID "cae0ac317cca74ba" and name "haiche/ubuntu-minconda"
INFO[0004] Created VM with ID "c1ab652804e664ed" and name "haiche-minconda-vm"
```

If you use a private registry such as ECR, run the command above with --runtime=docker so that Ignite can pull from the private registry.
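Because every image change means build, push, then run, the loop is worth scripting. A minimal sketch, assuming the Dockerfile is in the current directory and you are already logged in to the registry with docker login: build_push_run_cmds is a hypothetical helper that returns the commands, and main prints them (dry run) unless told to execute them.

```python
import shlex
import subprocess


def build_push_run_cmds(image: str, config: str, private_registry: bool = False) -> list:
    """Return the command sequence: build the image, push it, boot a VM from it."""
    run = ["sudo", "ignite", "run", "--config", config]
    if private_registry:
        # e.g. ECR: let Ignite pull the image through the Docker runtime
        run.append("--runtime=docker")
    return [
        ["docker", "build", "-t", image, "."],
        ["docker", "push", image],
        run,
    ]


def main(dry_run: bool = True) -> None:
    for cmd in build_push_run_cmds("haiche/ubuntu-minconda", "miniconda-vm.yaml"):
        print("$", " ".join(shlex.quote(part) for part in cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)  # stop at the first failing step


if __name__ == "__main__":
    main()
```

Keeping the commands as argument lists (rather than one shell string) avoids quoting problems, and the dry-run default lets you review the sequence before anything touches the registry.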

Test the new VM and its conda environment:

```
$ ssh -i path/your/id_rsa root@172.17.0.4
...
(haiche) root@c1ab652804e664ed:~# python
Python 3.7.13 (default, Mar 29 2022, 02:18:16)
[GCC 7.5.0] :: Anaconda, Inc. on linux
Type "help", "copyright", "credits" or "license" for more information.
>>> import fastapi
>>> fastapi.__version__
'0.74.1'
>>>
```

So far I like the configuration-file approach: it's easy to see everything in one place. Now a member simply provides a config file and a public key to instantly create a fresh VM with every environment they need.

Cloud support for nested virtualization

Another question on my mind: "OK, where am I going to run these Firecracker VMs in production?" The funny thing about running a VM in the cloud is that cloud instances are already VMs. Running a VM inside a VM is called "nested virtualization", and not all cloud providers support it. For example, AWS only supports it on bare-metal instances, which are ridiculously expensive.

GCP supports nested virtualization, but not by default: you have to enable it when creating the VM. DigitalOcean supports nested virtualization by default, even on its smallest droplets.

Some afterthoughts

A few things about this approach are still on my mind:

- Firecracker doesn't currently support snapshot restore, but will in the near future: https://github.com/firecracker-microvm/firecracker/issues/1184

- The base image can't be upgraded as easily as with docker pull. I've been dealing with this by making a copy every time, but that's slow and feels really inefficient. There are some solutions online that I will try later, such as Device mapper to manage firecracker images.

- I don't know yet whether it's possible to run graphical applications in Firecracker.

- Firecracker with Kubernetes is a new thing, but I don't find it appealing because grouping containers in Pods is already fast and secure. Some people pointed me to this useful thread discussing why they aren't compatible yet:

I often hear people ask why Kubernetes and Firecracker (FC) can’t just be used together. It seems like an intuitive combination, Kubernetes is popular for orchestration, and Firecracker provides strong isolation boundaries. So why aren’t they compatible yet? Read on 🧵

— Micah Hausler (@micahhausler) March 13, 2020

Here are some links I found useful while researching Firecracker:

- How AWS Firecracker works: a deep dive that demonstrates some of the concepts with a tiny version of Firecracker

- AWS Fargate and Lambda are backed by Firecracker for serverless computing

- Comparing other isolation techniques: Sandboxing and Workload Isolation
